Guardrails for AI-Generated Code and AI-Driven Refactoring

Static tests check your code at rest. ReGrade's patented runtime-behavior comparison checks it in motion — catching regressions, vulnerabilities, and performance issues before they reach production.

The code is changing. The risks are growing.

Proven in the Wild

A vulnerability hid for 7 years. Zero tests caught it. ReGrade found it on the first replay.

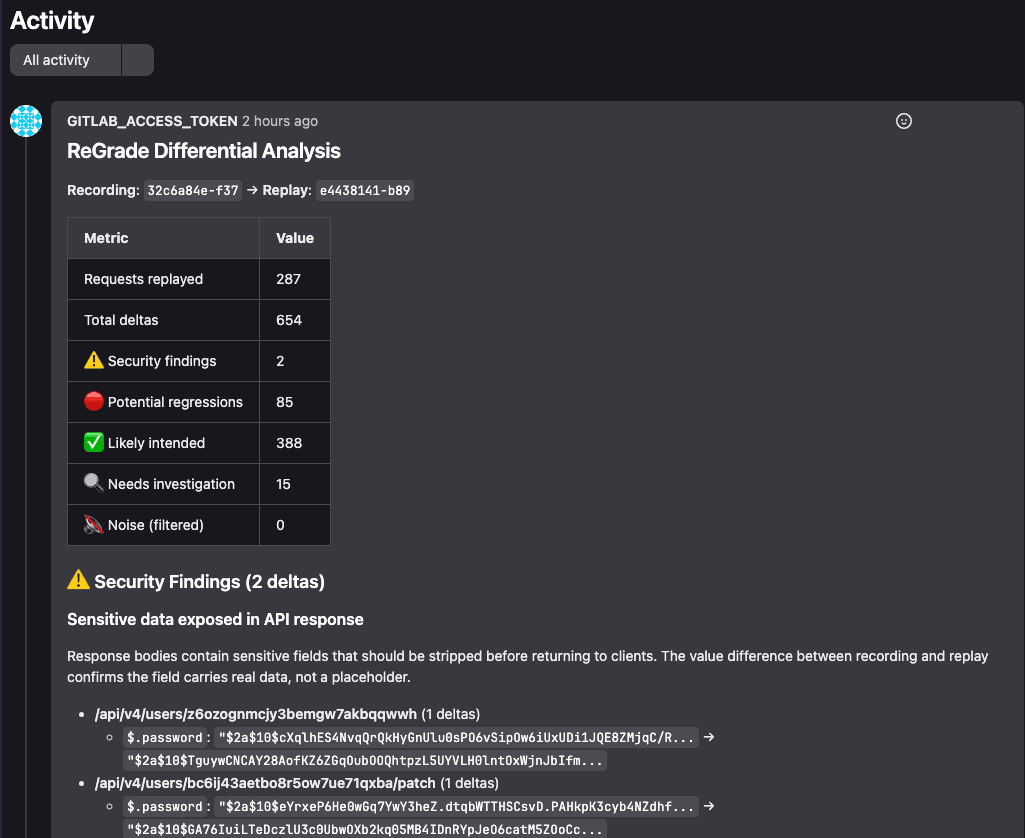

A widely used open-source platform shipped a password-hash disclosure bug for 7 years. Standard tests passed every day. ReGrade caught it on the first replay, without knowing the vulnerability existed.

Example ReGrade merge request comments

7 Years: Undetected

First Replay: Found Immediately

Zero Prior Knowledge: Used existing tests

Powered by NCAST Technology

Three steps. Use your existing tests.

ReGrade sends identical real-world requests to both your current and candidate versions, then compares responses field-by-field.

1. Record

Capture traffic from any source: production, CI tests, or security scanners. ReGrade works with whatever you already have.

2. Replay

Replay the same traffic against your candidate version. The original responses were already captured in step one.

3. Compare

Field-level differential analysis identifies regressions, vulnerabilities, and performance changes — automatically.
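To make the compare step concrete, here is a minimal sketch of a field-level diff between a recorded response and a replayed one. It is an illustration only, not ReGrade's implementation: the function name `diff_fields`, the tuple layout, and the sample payloads are all assumptions, and the responses are assumed to be already-decoded JSON.

```python
from typing import Any


def diff_fields(baseline: Any, candidate: Any, path: str = "") -> list[tuple[str, str, Any, Any]]:
    """Recursively compare two decoded JSON values.

    Returns (path, kind, baseline_value, candidate_value) tuples, where kind
    is "added", "removed", or "changed". Hypothetical helper for illustration.
    """
    diffs: list[tuple[str, str, Any, Any]] = []
    if isinstance(baseline, dict) and isinstance(candidate, dict):
        # Walk the union of keys so additions and removals are both seen.
        for key in sorted(baseline.keys() | candidate.keys()):
            child = f"{path}.{key}" if path else key
            if key not in candidate:
                diffs.append((child, "removed", baseline[key], None))
            elif key not in baseline:
                diffs.append((child, "added", None, candidate[key]))
            else:
                diffs.extend(diff_fields(baseline[key], candidate[key], child))
    elif isinstance(baseline, list) and isinstance(candidate, list):
        # Compare lists position by position.
        for i in range(max(len(baseline), len(candidate))):
            child = f"{path}[{i}]"
            if i >= len(candidate):
                diffs.append((child, "removed", baseline[i], None))
            elif i >= len(baseline):
                diffs.append((child, "added", None, candidate[i]))
            else:
                diffs.extend(diff_fields(baseline[i], candidate[i], child))
    elif baseline != candidate:
        # Scalars, or values whose container types no longer match.
        diffs.append((path, "changed", baseline, candidate))
    return diffs


# Made-up payloads: the recorded response vs. the candidate's replayed response.
recorded = {"id": 7, "name": "ada", "roles": ["user"]}
replayed = {"id": 7, "name": "ada", "roles": ["user", "admin"], "password_hash": "x1"}

diffs = diff_fields(recorded, replayed)
for path, kind, old, new in diffs:
    print(f"{path}: {kind} ({old!r} -> {new!r})")
```

A real comparison would also need to ignore fields that legitimately vary between runs, such as timestamps or request IDs; this sketch leaves that out.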

What testing and code review miss

Tests verify what you expect. ReGrade catches what you don't.

Performance Regression Detection

Compare P95 and P99 latency across versions. Catch performance degradation that unit tests can't measure and load tests don't cover for every endpoint.
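As a rough illustration of comparing tail latency across versions, the sketch below computes nearest-rank percentiles for two sets of latency samples and flags any percentile that slowed by more than a tolerance. The function names, the 10% tolerance, and the sample data are assumptions made for this example, not ReGrade's actual method or thresholds.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_regressions(baseline_ms, candidate_ms, pcts=(95, 99), tolerance=0.10):
    """Return {pct: (baseline, candidate)} for each percentile where the
    candidate is slower than the baseline by more than `tolerance`."""
    flagged = {}
    for pct in pcts:
        base = percentile(baseline_ms, pct)
        cand = percentile(candidate_ms, pct)
        if cand > base * (1 + tolerance):
            flagged[pct] = (base, cand)
    return flagged


# Made-up samples: the candidate matches the baseline except in the slowest 5%.
baseline_ms = list(range(1, 101))            # 1..100 ms
candidate_ms = list(range(1, 96)) + [200] * 5

flagged = latency_regressions(baseline_ms, candidate_ms)
print(flagged)
```

In this sample only the P99 is flagged: the P95 is unchanged at 95 ms, while the P99 moves from 99 ms to 200 ms, a kind of tail regression that an average would mostly hide.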

Zero-Day Discovery

By comparing actual responses against a known-good baseline, ReGrade surfaces vulnerabilities that no test was written to find — because no one knew they existed.
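One way a baseline comparison could surface data exposure that no test targets is to collect every field path seen in the baseline responses and report paths that appear only in the candidate. The sketch below assumes decoded JSON responses; `field_paths` and `newly_exposed` are hypothetical helpers, and nothing here describes how ReGrade itself classifies findings.

```python
def field_paths(value, prefix=""):
    """Collect the path of every leaf field in a decoded JSON value.

    List positions are collapsed to "[]" so that the same field in
    different list items maps to a single path.
    """
    if isinstance(value, dict):
        paths = set()
        for key, child in value.items():
            paths |= field_paths(child, f"{prefix}.{key}" if prefix else key)
        return paths
    if isinstance(value, list):
        paths = set()
        for child in value:
            paths |= field_paths(child, f"{prefix}[]")
        return paths
    return {prefix}


def newly_exposed(baseline_responses, candidate_responses):
    """Return field paths present in candidate responses but in no baseline response."""
    seen = set()
    for response in baseline_responses:
        seen |= field_paths(response)
    exposed = set()
    for response in candidate_responses:
        exposed |= field_paths(response) - seen
    return sorted(exposed)


# Made-up responses to the same request before and after a change.
baseline_responses = [{"users": [{"id": 1, "name": "ada"}]}]
candidate_responses = [{"users": [{"id": 1, "name": "ada", "password_hash": "x1"}]}]

exposed = newly_exposed(baseline_responses, candidate_responses)
print(exposed)
```

The check needs no list of sensitive field names: anything the baseline never returned is reported, and a reviewer decides whether the new field is intended.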

AI Agent Feedback Loop

ReGrade's MCP integration feeds findings directly back to your AI coding agent, creating a self-correcting loop. The agent learns from real behavioral differences, not just test failures.
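The feedback loop depends on turning findings into text an agent can act on. The sketch below shows one possible shape for such a context block. It does not use the MCP protocol or any ReGrade API; the finding keys (`request`, `path`, `kind`, `baseline`, `candidate`) and the output wording are assumptions made for illustration.

```python
def render_context_block(findings, limit=20):
    """Render replay findings as a plain-text block for an agent's context.

    `findings` is a list of dicts with hypothetical keys: request, path,
    kind, baseline, candidate. At most `limit` findings are listed.
    """
    if not findings:
        return "Replay: no behavioral differences found."
    lines = [f"Replay found {len(findings)} behavioral difference(s):"]
    for finding in findings[:limit]:
        lines.append(
            f"- {finding['request']} | {finding['path']} | {finding['kind']}: "
            f"{finding['baseline']!r} -> {finding['candidate']!r}"
        )
    if len(findings) > limit:
        lines.append(f"({len(findings) - limit} more omitted)")
    return "\n".join(lines)


# Made-up finding, in the shape a field-level comparison might produce.
findings = [
    {
        "request": "GET /users/7",
        "path": "password_hash",
        "kind": "added",
        "baseline": None,
        "candidate": "x1",
    }
]

block = render_context_block(findings)
print(block)
```

Capping the list keeps the block small enough to fit in an agent's context while still reporting how many differences were left out.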

From Our Pre-Specified Study

Supercharge your AI coding agent

17 LLMs · 6 providers · 1,400+ trials · 18 known bugs · 300,000-line Python codebase

17 LLMs / 6 AI providers

One context block. Every major coding agent.

Claude Code, Codex CLI, qwen-code — every major AI coding agent. Anthropic, OpenAI, Google, xAI, DeepSeek, Alibaba — every major model API. No model retraining. No CI changes.

See all providers tested →

Built into your AI coding workflow

MCP Server

Native integration with AI coding agents. ReGrade findings flow directly into the agent's context for immediate self-correction.

Merge Request Analysis

Automatically analyze candidate builds before merge. ReGrade comments appear alongside your code review with specific field-level findings.

Preview Before Deploy

Replay production traffic against your candidate version in a safe environment. Know what will break before it reaches users.

Deterministic guardrails for AI-generated code

See how ReGrade catches errors in AI-generated code and feeds fixes back to the AI coding agent.